- December 31, 2020

- in: Blog

I edited hundreds of stories in 2020, so choosing my favorites would be an exercise in futility.

Instead, I’ve tried to gather a sample of Extra Crunch stories that taught me something new. (Which means this top 10 list betrays my ignorance, a humbling admission for a know-it-all like me.)

While narrowing down the field of candidates, I realized that we’ll be covering each of the topics on this list in greater depth next year. We already have stories in the works about no-code software, the emergence of edtech, proptech and B2B marketplaces, to name just a few.

Some readers are skeptical about paywalls, but I don’t think it’s boastful to say that Extra Crunch is a premium product, just like Netflix or Disney+. I know: We’re not as entertaining as a historical drama about the reign of Queen Elizabeth II or a space western about a bounty hunter.

But, speaking as someone who’s worked at several startups, Extra Crunch stories contain actionable information you can use to build a company and/or look smart in meetings — and that’s worth something. Thanks for reading, and I hope you have a very happy new year.

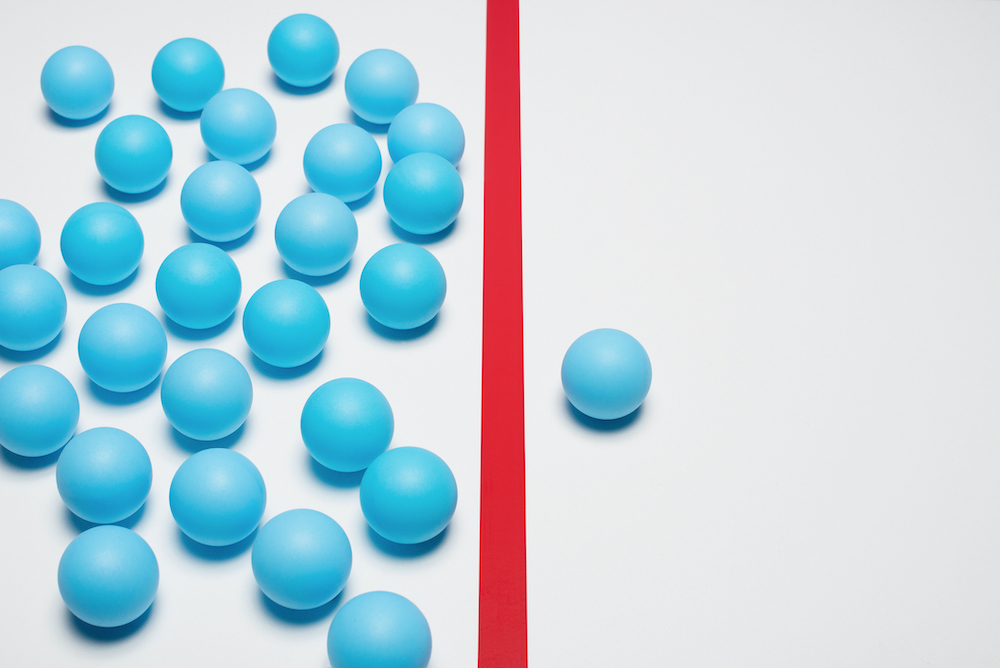

1. The VCs who founders love the most

Image Credits: Bryce Durbin/TechCrunch

Managing Editor Danny Crichton spearheaded the development of The TechCrunch List earlier this year to help seed-stage founders connect with VCs who write first checks.

The TechCrunch List has no paywall and contains details and recommendations about more than 400 investors across 22 verticals. Once it launched, Danny crunched the data to pick out 11 investors about whom “founders were particularly effusive in their praise.”

2. API startups are so hot right now

Alex Wilhelm uses his weekday column The Exchange to keep a close eye on “private companies, public markets and the gray space in between,” but one effort stood out: an overview of six API-based startups that were “raising capital in rapid-fire fashion” while many companies were still trying to find their footing during COVID-19.

For me, this was particularly interesting because it helped me better understand that an optimal pricing structure can be key to a SaaS company’s initial success.

3. ‘No code’ will define the next generation of software

4. Tracking the growth of low-code/no-code startups

Two stories about the advent of no-code/low-code software that we ran in July take the third and fourth positions on this list.

I have been a no-code user for some time: Using Zapier to send automated invitations via Slack for group lunches was a real time-saver in the pre-pandemic days.

“Enterprise expenditure on custom software is on track to double from $250 billion in 2015 to $500 billion in 2020,” so we’ll definitely be diving deeper into this topic in the coming months.

5. ‘Edtech is no longer optional’: Investors’ deep dive into the future of the market

Natasha Mascarenhas picked up TechCrunch’s edtech beat when she joined us just before the pandemic. Twelve months later, she’s an expert on the topic.

In July, she surveyed six edtech investors to “get into the macro-impact of rapid change on edtech as a whole.”

- Ian Chiu, Owl Ventures

- Shauntel Garvey and Jennifer Carolan, Reach Capital

- Jan Lynn-Matern, Emerge Education

- David Eichler, TCV

- Jomayra Herrera, Cowboy Ventures

6. B2B marketplaces will be the next billion-dollar e-commerce startups

In 2018, B2B marketplaces saw an estimated $680 billion in sales, but that figure is expected to reach $3.6 trillion by 2024.

As companies shift their purchasing online, these platforms are adding a range of complementary services, such as payment management, targeted advertising and logistics, while also hardening their infrastructure.

7. Facebook’s former PR chief explains why no one is paying attention to your startup

Caryn Marooney, right, vice president of technology communications at Facebook, poses for a picture on the red carpet for the 6th annual 2018 Breakthrough Prizes at Moffett Federal Airfield, Hangar One in Mountain View, Calif., on Sunday, Dec. 3, 2017. Image Credits: Nhat V. Meyer/Bay Area News Group

Reporter Lucas Matney spoke to Caryn Marooney in August at TechCrunch Early Stage about how startup founders who hope to expand their reach need to do a better job of connecting with journalists.

“People just fundamentally aren’t walking around caring about this new startup,” she said. “Actually, nobody does.”

Speaking as someone who’s been on both sides of this equation, I most appreciated her advice about focusing on “simplicity and staying consistent” when it comes to messaging.

“Don’t let the complexity of your intellect cloud what needs to be simple,” she said.

8. You need a minimum viable company, not a minimum viable product

In a guest post for Extra Crunch, seed-stage VC Ann Miura-Ko shared some of what she’s learned about “the magic of product-market fit,” which she termed “the defining quality of an early-stage startup.”

According to Miura-Ko, a co-founding partner at Floodgate, startups can only reach this stage when their business model, value propositions and ecosystem are in balance.

Because it draws on lessons from her portfolio companies like Lyft, Refinery29 and Twitch, this article should be required reading for every founder. As one commenter posted, “I read this thinking, ‘I need to add some slides to my deck!’”

9. 6 investment trends that could emerge from the COVID-19 pandemic

10 January 2020, Berlin: Doctor Olaf Göing, chief physician of the clinic for internal medicine at the Sana Klinikum Lichtenberg, tests mixed-reality 3D glasses for use in cardiology. They can thus access their patients’ medical data and visualize the finest structures for diagnostics and operation planning by hand and speech. The Sana Clinic is, according to its own statements, the first hospital in the world to use this novel technology in cardiology. Image Credits: Jens Kalaene/picture alliance via Getty Images

During “the early innings of this period of uncertainty,” an article we published offered several predictions about investor behavior in the U.S.

Even though we posted this in April, each of these forecasts seems spot-on:

- Future of work: promoting intimacy and trust.

- Healthcare IT: telemedicine and remote patient monitoring.

- Robotics and supply chain.

- Cybersecurity.

- Education = knowledge transfer + social + signaling.

- Fintech.

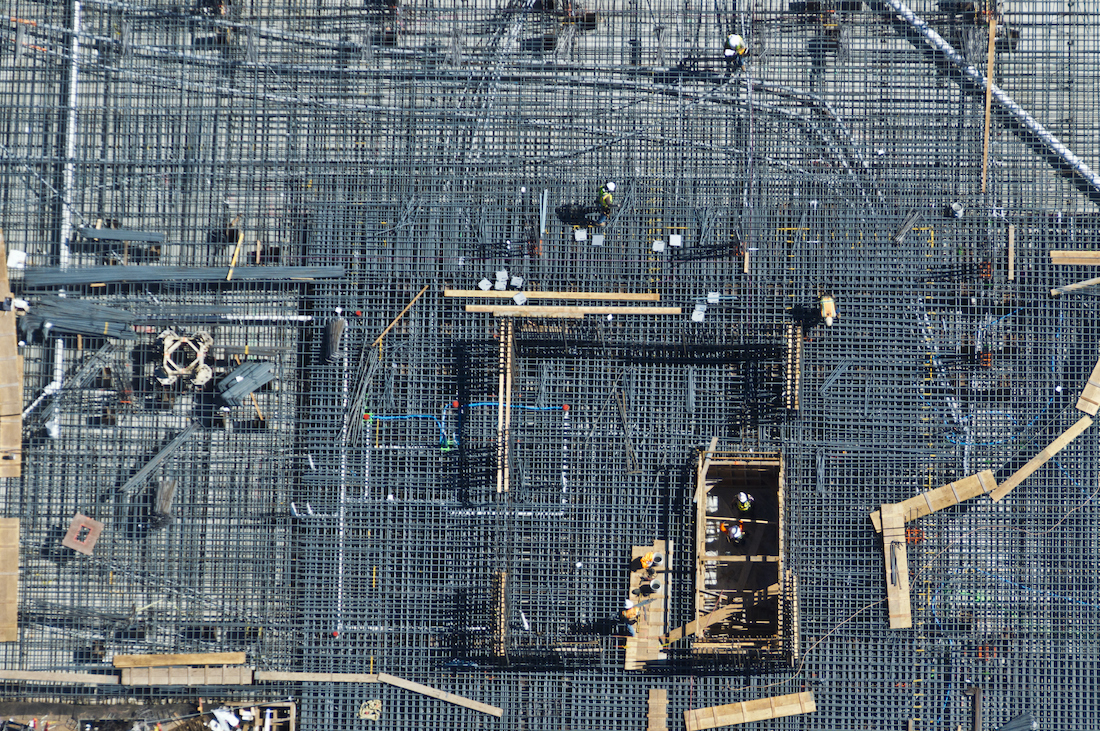

10. Construction tech startups are poised to shake up a $1.3-trillion-dollar industry

I’ve always found the concept of total addressable market (TAM) hard to embrace fully — the arrival of a single disruptive company could change an industry’s TAM in a week.

However, several factors are combining to transform the construction industry: high fragmentation, poor communication, a skilled labor shortage and a lack of data transparency.

Startups that help builders manage aspects like pre-construction, workflow and site visualization are making huge strides, and because “construction firms spend less than 2% of annual sales volume on IT,” there is plenty of room to grow: The size of this TAM is not at all speculative.

11. Don’t let VCs be the gatekeepers of your success

As a bonus, I’m including a TechCrunch op-ed written by insurtech founder Kevin Henderson that describes the myriad challenges he has faced as a Black entrepreneur in Silicon Valley.

Some of the discussions about the lack of diversity in tech can feel abstract, but his post describes its concrete consequences. For starters, he has never had the opportunity to pitch at a VC firm where another Black person was in the room.

“Black founders have a better chance playing pro sports than they do landing venture investments,” writes Henderson.